Short Takes: “Automatica: Robots Vs. Music”

Cinematographer Timur Civan harnesses an array of technology to help craft this video for musician Nigel Stanford.

Photos and frame grabs courtesy of the filmmakers

In 2013, New York-based cinematographer Timur Civan, director Shahir Daud and New Zealand-based musician Nigel Stanford collaborated on “Cymatics: Science Vs. Sound,” a slick, stylish music video demonstrating the science of visualizing audio frequencies. Four years and 12 million YouTube views later, Stanford, Daud and Civan have reteamed for “Automatica: Robots Vs. Music,” a sequel of sorts to “Science Vs. Sound.” This time, however, rather than using technology to transform the experience of listening to music, Stanford sets out to transform the experience of making it, in “a future world where robots have some kind of artificial intelligence and have achieved a form of singularity,” as he explains.

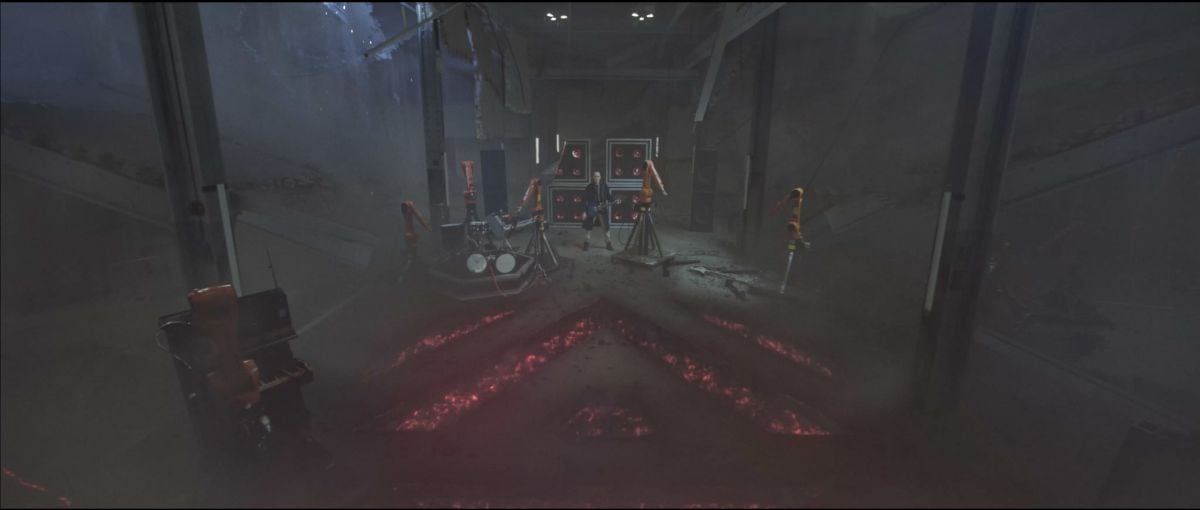

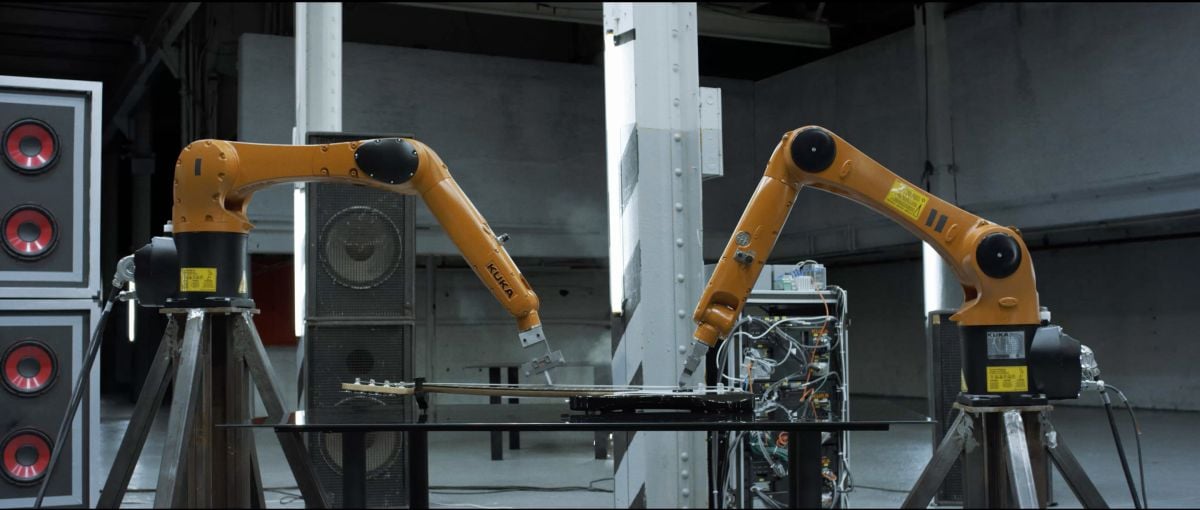

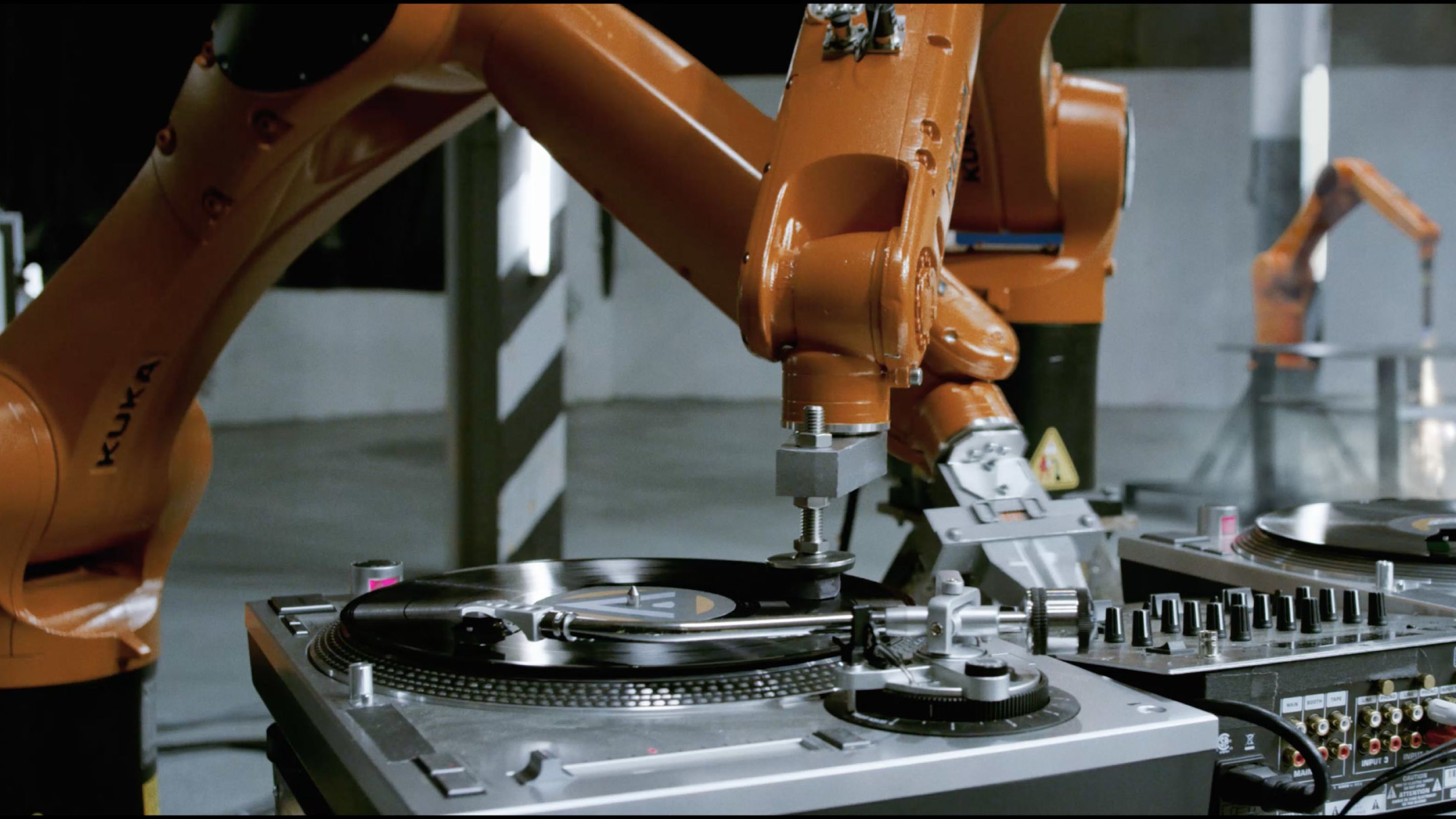

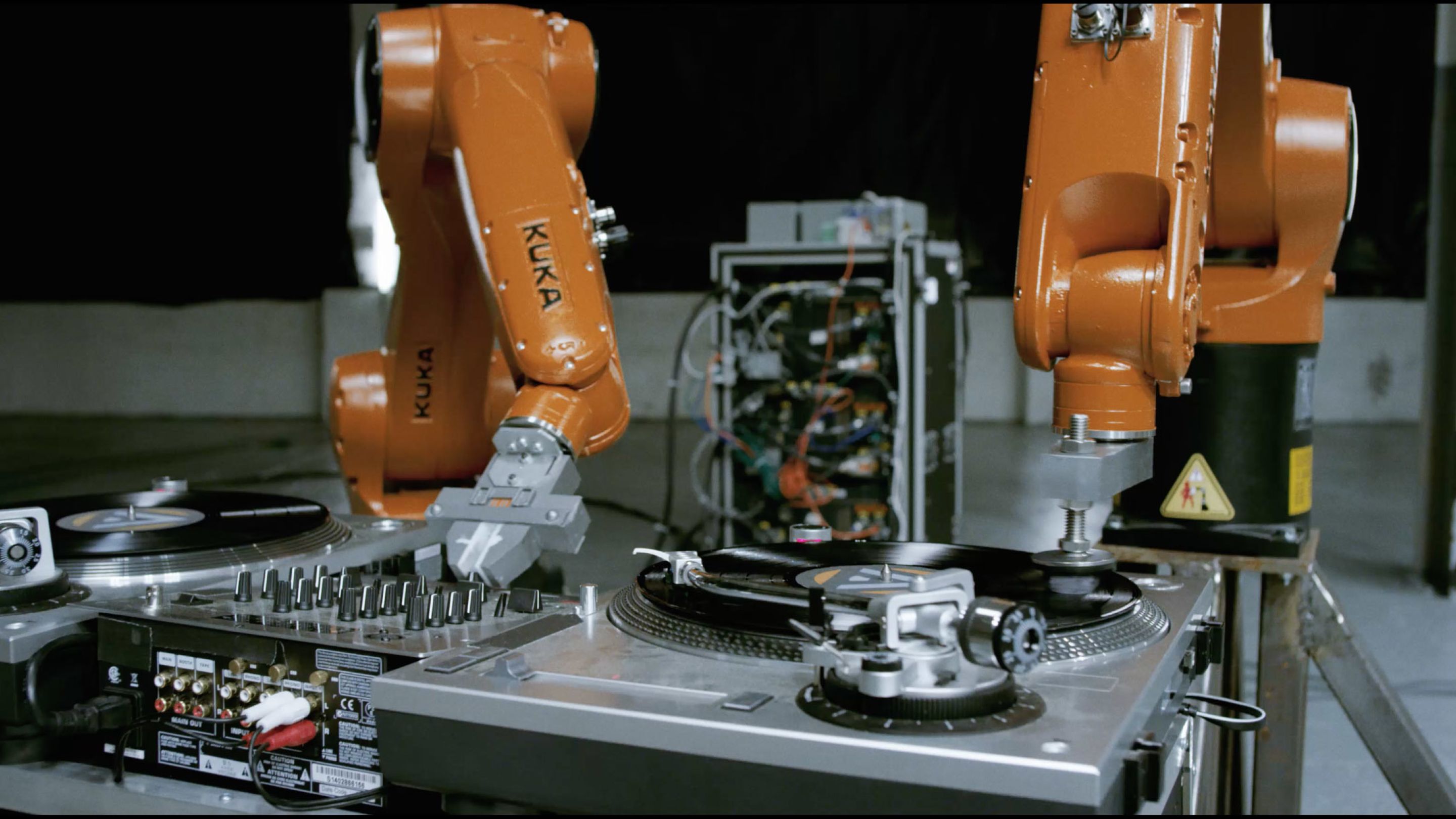

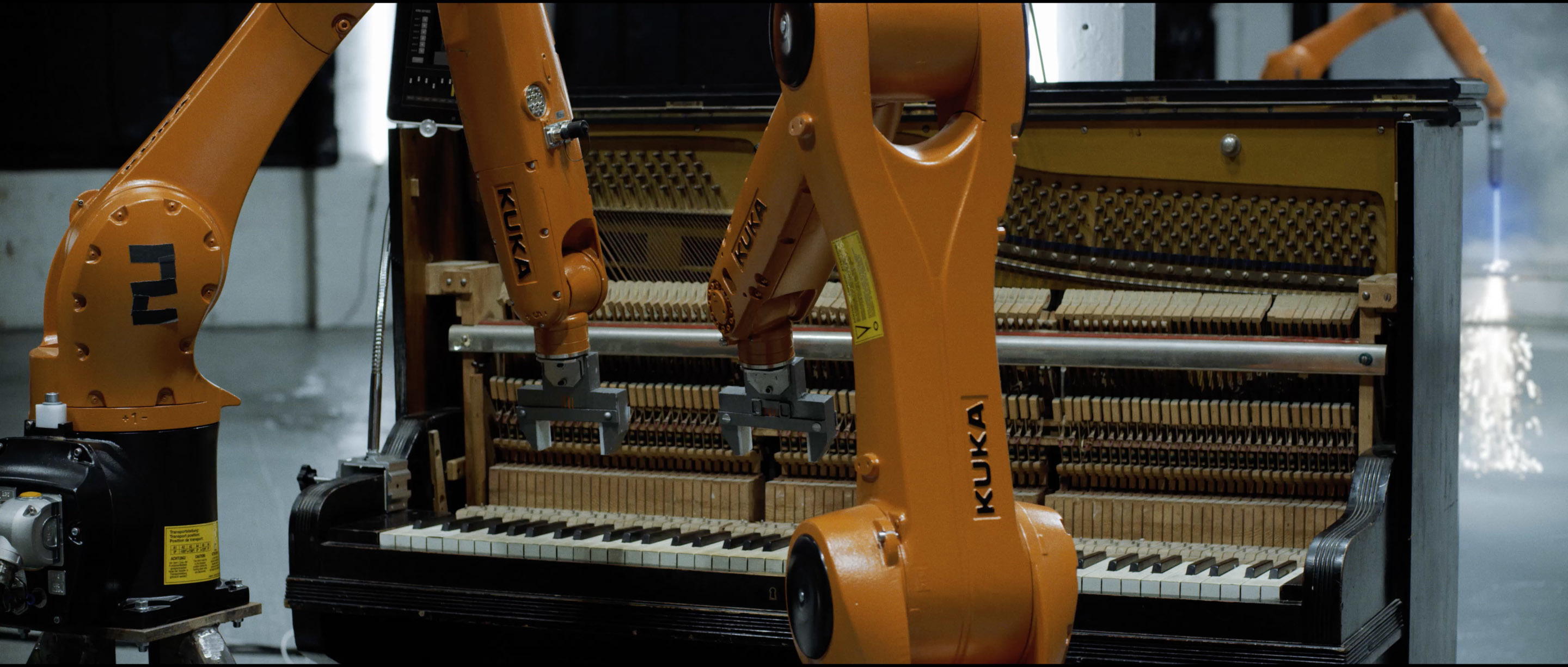

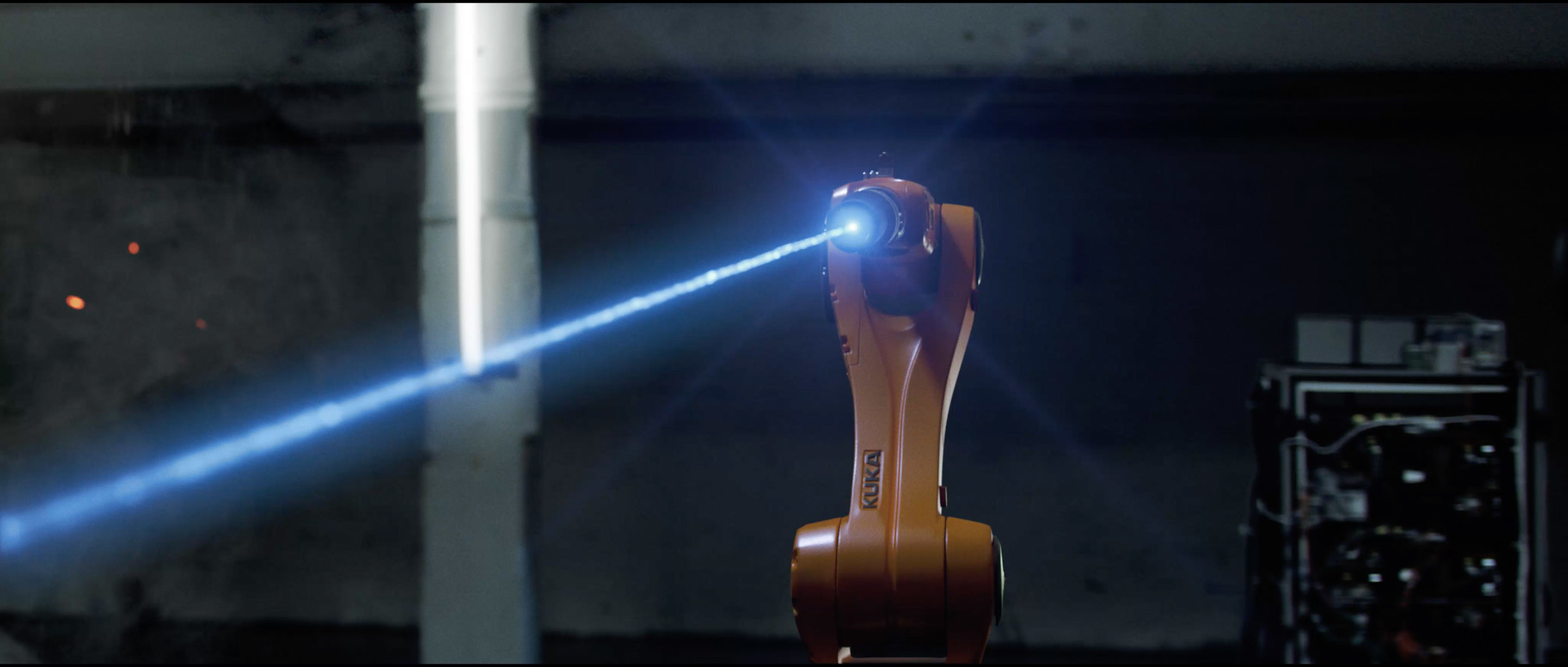

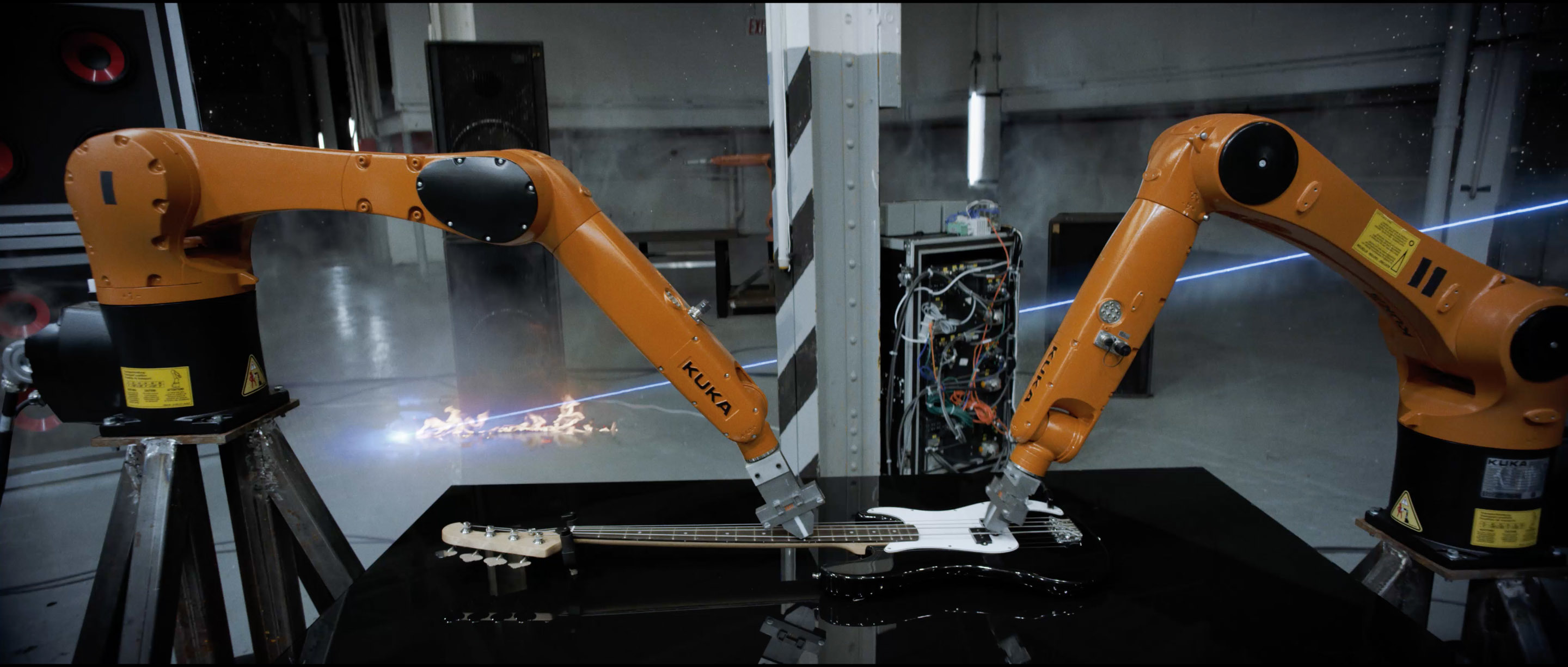

As in “Cymatics,” Stanford appears in “Automatica” in the part of a “mad scientist of music,” but the real star of “Robots Vs. Music” is the Kuka Robotics Agilus Sixx — a compact, six-axis industrial robot arm usually employed for such functions as packing boxes or milling plastic pipes. With “Automatica,” Stanford wanted to see if he could program the robots to play the drums, piano, and electric bass and guitar, and to mix and scratch on a pair of DJ turntables.

The production employed three Agilus robots, but the script called for nine in total — some armed with instruments, others with cutting lasers — an order fulfilled through a mix of clever editing and visual effects.

“Our end goal was to create visuals that are intricately tied to the corresponding audio, and a camera that enhances that sensation through motivated moves,” says Daud. “Timur had to juggle all of the mathematical requirements for every shot while still trying to make our orange robots look sexy.”

Each Agilus Sixx weighs 110 pounds, can carry a 13-pound payload, and moves fast enough for high-speed assembly-line work or laying down a sick drum solo. But performing in real time would have required the filmmakers to bolt the robots to the floor; otherwise, the torque needed to swing an arm that fast would have flipped the robot over on its base.
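Why slowing the robots down keeps them upright follows from a standard result in robot dynamics: replaying the same path at a fraction s of full speed scales joint velocities by s and accelerations by s², so the inertial and velocity-dependent loads (everything except gravity) shrink by s². The Python sketch below applies that to a deliberately simplified tipping check. Only the 110-pound weight comes from the article; the full-speed reaction moment and the base half-width are hypothetical placeholders, and the model ignores the payload, the base's own mass and any ballast.

```python
G = 9.81                 # gravitational acceleration, m/s^2
LB_TO_KG = 0.45359237    # pounds to kilograms

def dynamic_moment(full_speed_moment_nm: float, speed_fraction: float) -> float:
    """Scale a full-speed inertial reaction moment to a slowed replay of the same path.

    Replaying at speed_fraction s scales accelerations (and velocity-squared terms)
    by s**2, so the non-gravity part of the load scales by s**2 too.
    """
    return full_speed_moment_nm * speed_fraction ** 2

def restoring_moment(mass_kg: float, half_width_m: float) -> float:
    """Moment of the robot's weight about the edge of its base (simplified model)."""
    return mass_kg * G * half_width_m

def tips_over(full_speed_moment_nm: float, speed_fraction: float,
              mass_kg: float, half_width_m: float) -> bool:
    """True if the scaled reaction moment exceeds the restoring moment."""
    return (dynamic_moment(full_speed_moment_nm, speed_fraction)
            > restoring_moment(mass_kg, half_width_m))

ROBOT_KG = 110 * LB_TO_KG      # 110 lb from the article, roughly 49.9 kg
PEAK_MOMENT_NM = 300.0         # HYPOTHETICAL full-speed reaction moment, not a KUKA figure
HALF_WIDTH_M = 0.3             # HYPOTHETICAL distance from centre of mass to base edge

for s in (1.0, 1 / 4, 1 / 8, 1 / 10):
    print(f"speed x{s:.3f}: {dynamic_moment(PEAK_MOMENT_NM, s):6.1f} N*m vs "
          f"{restoring_moment(ROBOT_KG, HALF_WIDTH_M):.1f} N*m restoring, "
          f"tips={tips_over(PEAK_MOMENT_NM, s, ROBOT_KG, HALF_WIDTH_M)}")
```

With these placeholder numbers the arm tips at full speed but not at quarter speed, since running at 1/4 speed cuts the dynamic moment by a factor of 16; the real margins depend on figures the article doesn't give.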

“Our robots were sitting on movable bases, so they had to be moved super slow: 1/4 speed, 1/8 speed, 1/10 speed,” explains Civan. “We’re essentially shooting time-lapse footage.” The cinematographer chose to shoot on a Red Epic Dragon belonging to Stanford, recording 6K full-frame Redcode Raw (3:1 compression for stop motion, 5:1 for real time) through Arri/Zeiss Ultra Primes. A 900-pound 12' Gazelle motion-control crane allowed him to sync his camera moves with those of the robots, and made it possible to composite elements captured at different frame rates for playback at a 25p time base.
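The time-lapse arithmetic Civan describes is simple to sketch. Assuming one captured frame becomes one frame of the 25p edit, a robot running at a fraction s of real-time speed has to be filmed at 25 × s frames per second to look real-time on playback: 6.25 fps at 1/4 speed, 3.125 fps at 1/8, 2.5 fps at 1/10. The function names below are illustrative, not anything from the production.

```python
from fractions import Fraction

PLAYBACK_FPS = Fraction(25)  # the 25p time base mentioned in the article

def capture_fps(speed_fraction: Fraction, playback_fps: Fraction = PLAYBACK_FPS) -> Fraction:
    """Frame rate at which motion slowed to speed_fraction plays back at real time."""
    return playback_fps * speed_fraction

def set_seconds(screen_seconds: Fraction, speed_fraction: Fraction) -> Fraction:
    """Wall-clock time on set needed to cover screen_seconds of finished footage."""
    return screen_seconds / speed_fraction

for s in (Fraction(1, 4), Fraction(1, 8), Fraction(1, 10)):
    print(f"robots at {s} speed: shoot at {float(capture_fps(s)):g} fps; "
          f"10 s of screen time takes {float(set_seconds(Fraction(10), s)):g} s on set")
```

Using exact fractions rather than floats keeps the rates exact, which helps when elements shot at different rates have to line up frame for frame in the composite.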

“Automatica” was shot over the course of five days — which included a day and a half of prelighting and prep — at a Brooklyn warehouse off Atlantic Avenue. The filmmakers approached each shot by breaking it down into discrete elements, in descending order of control: the Gazelle speed, the Agilus speed, the lighting cues, and the camera frame rate. “The video was storyboarded top to bottom,” says Civan. “Every single shot was planned out. It had to be.”

Provided by Alex Fernbach at Cobalt Stages, this particular Gazelle arm was an older model that used only stepper motors, rather than the combination of steppers and servos found in newer models, so it was better suited to the smaller, slower moves of tabletop work. “If you push it past its limits, it grinds to a screeching halt,” says Civan.

Civan used a Sony Alpha a5000 mirrorless APS-C camera with a handgrip and Metabones PL adapter as a makeshift video viewfinder to help him set the Red Epic’s positions at various points along each move. Gazelle operator Alexandra Menapace then used a PC terminal running Kuper Controls’ command-line software to program the camera-dolly and jib-arm key frames in real space, later applying a log curve that smoothed out the path between them. The Epic itself was mounted to a Lambda remote head, which Menapace operated independently of the Gazelle arm.
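The article doesn't spell out the curve Kuper applies between key frames, so the sketch below swaps in a common stand-in, a cubic ease-in/ease-out (smoothstep) per segment, to show the general idea of turning a handful of keyed positions into a smooth move for one axis. The function names and the sample track key frames are hypothetical.

```python
from bisect import bisect_right

def smoothstep(u: float) -> float:
    """Cubic ease-in/ease-out on [0, 1]: zero slope at both ends of the segment."""
    return u * u * (3.0 - 2.0 * u)

def axis_position(keyframes: list[tuple[float, float]], frame: float) -> float:
    """Position of one axis (e.g. track or jib) at a given frame.

    keyframes: (frame, position) pairs sorted by frame. Outside the keyed range
    the axis holds its first or last position.
    """
    frames = [f for f, _ in keyframes]
    if frame <= frames[0]:
        return keyframes[0][1]
    if frame >= frames[-1]:
        return keyframes[-1][1]
    i = bisect_right(frames, frame) - 1          # segment containing this frame
    (f0, p0), (f1, p1) = keyframes[i], keyframes[i + 1]
    u = (frame - f0) / (f1 - f0)                 # normalized time within the segment
    return p0 + (p1 - p0) * smoothstep(u)

# HYPOTHETICAL track key frames in feet: start, midpoint and end of a 100-frame move.
TRACK = [(0, 0.0), (50, 3.0), (100, 4.0)]
print([axis_position(TRACK, f) for f in (0, 25, 50, 75, 100)])
```

One caveat of this simple choice: easing each segment independently brings the axis to a momentary stop at every interior key frame. That suits a single start-to-end move; for multi-key moves, a spline through all the keys keeps the camera moving.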

For every shot in which he appears, Stanford is always a real-time element, appearing to duck around and interface with the robots — although he was shot separately, against a greenscreen backdrop. (“If one of those Kukas actually hit him, it’s lights out,” Civan remarks.) A timecode trigger synced the song with the motion-control moves, and the Dragon’s sync pulse locked the motion-control dolly to the camera’s shutter. Using the motion-control rig’s maximum real-time speed to set the pace, the filmmakers calculated each move in feet per second, then programmed the robots to operate at a matching speed.
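A rough sketch of that pacing logic, under our own assumptions: if a move needs more speed than the motion-control rig can deliver in real time, the whole shot is slowed by the ratio of the rig's top speed to the required speed, and the robots are programmed to run at that same fraction. The 12-foot move, 2-second screen duration and 1.5 ft/s ceiling below are hypothetical numbers, not the Gazelle's specifications.

```python
PLAYBACK_FPS = 25  # 25p time base

def shot_speed_fraction(move_length_ft: float, screen_seconds: float,
                        rig_max_ft_per_s: float) -> float:
    """Fraction of real-time speed at which the whole shot has to run.

    Never exceeds 1.0: if the rig can make the move in real time, nothing is slowed.
    """
    required_ft_per_s = move_length_ft / screen_seconds
    return min(1.0, rig_max_ft_per_s / required_ft_per_s)

def plan_shot(move_length_ft: float, screen_seconds: float,
              rig_max_ft_per_s: float) -> dict:
    """Speed fraction shared by rig and robots, plus the matching capture rate."""
    s = shot_speed_fraction(move_length_ft, screen_seconds, rig_max_ft_per_s)
    return {
        "speed_fraction": s,                                 # rig and robots both run at this
        "rig_ft_per_s": move_length_ft / screen_seconds * s,  # actual rig speed on set
        "capture_fps": PLAYBACK_FPS * s,                     # keeps playback at real time
    }

print(plan_shot(12.0, 2.0, 1.5))
```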

“Every shot needed careful coordination of each of these elements before we could roll,” says Daud. “There were often long periods where Nigel, Timur, Allie and myself would sit around with calculators figuring out at exactly which frame an event needed to trigger.”
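The frame arithmetic the team worked out with calculators can be sketched as follows, assuming each captured frame maps to one frame of the 25p edit. A beat that falls t seconds into the shot lands on output frame 25t no matter how slowly the shot is captured, but on set it happens t / s seconds after the start trigger when everything runs at speed fraction s. The helper names and the sample cue are hypothetical.

```python
PLAYBACK_FPS = 25  # 25p time base; timecode frames run 00-24

def trigger_frame(song_seconds: float, fps: int = PLAYBACK_FPS) -> int:
    """Output frame on which an event at song_seconds (from shot start) must land."""
    return round(song_seconds * fps)

def set_seconds(song_seconds: float, speed_fraction: float) -> float:
    """Wall-clock seconds after the start trigger at which the event occurs on set."""
    return song_seconds / speed_fraction

def to_timecode(frame: int, fps: int = PLAYBACK_FPS) -> str:
    """Convert a frame count to HH:MM:SS:FF (25 fps timecode has no drop-frame variant)."""
    seconds, ff = divmod(frame, fps)
    minutes, ss = divmod(seconds, 60)
    hh, mm = divmod(minutes, 60)
    return f"{hh:02d}:{mm:02d}:{ss:02d}:{ff:02d}"

# HYPOTHETICAL cue: a drum hit 3.2 s into the shot, with everything at 1/8 speed.
frame = trigger_frame(3.2)
print(frame, to_timecode(frame), set_seconds(3.2, 1 / 8))
```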

Twelve 4' tungsten Kino Flos were mounted to the warehouse’s 12 support pylons to add depth to the frame. Every light on the set was DMX-controlled through a 4'-wide column of cables running back to a dimmer board, which let the filmmakers not only build precise lighting cues but also speed them up or slow them down in increments of 1/100 of a second.
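Stretching a lighting cue to match a slowed shot is the same division applied to time: a fade that lasts d seconds in real time lasts d / s seconds on set at speed fraction s. The sketch below also snaps the result to the 1/100-second steps the article mentions. Treating the board's timing as a simple grid of 0.01 s steps is our assumption, the function names and cue values are hypothetical, and nothing here reflects a particular console's cue syntax.

```python
from fractions import Fraction

STEP = Fraction(1, 100)  # cue-timing resolution cited in the article: 1/100 s

def scale_time(real_time_seconds: Fraction, speed_fraction: Fraction) -> Fraction:
    """Stretch a real-time duration for a shot running at speed_fraction,
    then snap it to the nearest 1/100 s step."""
    stretched = real_time_seconds / speed_fraction
    return round(stretched / STEP) * STEP  # round() on a Fraction returns an int

def scale_cue_list(cues: list[tuple[Fraction, Fraction]],
                   speed_fraction: Fraction) -> list[tuple[Fraction, Fraction]]:
    """Scale the (start, fade) pair of every cue in a shot."""
    return [(scale_time(start, speed_fraction), scale_time(fade, speed_fraction))
            for start, fade in cues]

# HYPOTHETICAL cues for a quarter-speed shot: (start, fade) in real-time seconds.
cues = [(Fraction("0"), Fraction("0.333")), (Fraction("1.5"), Fraction("0.25"))]
for start, fade in scale_cue_list(cues, Fraction(1, 4)):
    print(f"start {float(start):.2f} s, fade {float(fade):.2f} s")
```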

“The lighting was a hell of a lot of fun,” says Civan. “Our gaffer and B-cam operator, Bill Amenta, said he’d hung more space lights on this shoot than on any other in his life, close to 44 6K units.” The lighting, robots and live-action elements were all orchestrated to the music, and each was then shot at a different time base. The only lamps not connected to the dimmer board were a handful of 2K tungsten PARs and Fresnels, plus two 10K Fresnels for the high-speed shots.

“It was a dance between setting the T-stop, filtration, frame rate and shutter speed,” says Civan, who used Formatt-Hitech Firecrest ND filters. The Epic was set to a legacy shutter-angle mode, which kept the shutter at 180 degrees at every frame rate, producing consistent motion blur from shot to shot. “Our DIT, Tom Wong, watched the waveform to make sure each shot matched,” says Civan. “Because the background is a flat gray, we knew where the exposure should fall. Even ungraded, I think it’s pretty even from shot to shot. In the final grade, the only thing we changed was the brightness and contrast.”
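Why a locked 180-degree shutter keeps the blur consistent can be checked with a few lines of arithmetic. Exposure time per frame is (angle / 360) / fps, so slowing a robot to a fraction s while cutting the frame rate to 25 × s lengthens each exposure by exactly 1/s, and the distance the robot travels during one exposure, which is what reads as motion blur, comes out the same as a real-time move shot at 25 fps. The longer exposure also admits log2(1/s) more stops of light, which the ND filters and T-stop changes have to absorb. The robot speed below is a hypothetical figure.

```python
import math

def exposure_time(fps: float, shutter_angle: float = 180.0) -> float:
    """Exposure time per frame, in seconds, for a given shutter angle."""
    return (shutter_angle / 360.0) / fps

def blur_per_frame(subject_speed: float, fps: float, shutter_angle: float = 180.0) -> float:
    """Distance the subject travels during one exposure (speed units x seconds)."""
    return subject_speed * exposure_time(fps, shutter_angle)

def stops_between(t_reference: float, t_new: float) -> float:
    """Exposure change in stops going from t_reference to t_new (positive = more light)."""
    return math.log2(t_new / t_reference)

REAL_TIME_SPEED = 2.0   # HYPOTHETICAL robot tool speed at full speed, ft/s
s = 1 / 4               # robot slowed to quarter speed

print(blur_per_frame(REAL_TIME_SPEED, 25),           # real-time element at 25 fps
      blur_per_frame(REAL_TIME_SPEED * s, 25 * s))   # slowed robot at 6.25 fps
print(stops_between(exposure_time(25), exposure_time(25 * s)))  # extra stops to cut
```

At 1/10 speed the same logic calls for 2.5 fps and log2(10), about 3.3 stops, of extra light to take out.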

Over the course of the video, the robots appear to malfunction and, as Civan describes it, “get all Pete Townshend” on their musical instruments. At one point, the electric bass is smashed through a glass table and onto the concrete floor beneath. Because the Agilus couldn’t move fast enough to actually smash the bass, the robot was filmed slowly swinging it down to the ground with no table in the shot; then the glass table was moved into frame and a bolt gun was used to shatter it while a Phantom Flex4K captured the action in raw format at 500 fps. The close-up of the guitar smashing into the ground was also shot with the Phantom. “You can see in the reflection of the breaking glass that we had all six lights on because we were shooting high speed,” Civan points out.

Civan also used the Phantom to capture the destruction of an antique baby grand piano at 750 fps. The robots’ playing builds to a crescendo until their arms come crashing down through the keyboard. A special-effects crewmember hid behind the piano and slammed a wooden two-by-four against the linkages connecting the keys to the hammers, causing the keys to fly upward off the board in a rippling wave pattern, rolling from the lowest to the highest note. An effects pyrotechnician with J&M Special Effects then activated a charge that blew the side off the instrument in a shower of dust and splinters.
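The high-speed numbers translate directly into screen time, assuming the Phantom footage was conformed one frame per frame into the 25p edit: 500 fps plays back 20 times slower than real time and 750 fps 30 times slower. The 0.5-second event length below is a hypothetical example.

```python
PLAYBACK_FPS = 25  # 25p time base of the finished video

def slowdown(capture_fps: float, playback_fps: float = PLAYBACK_FPS) -> float:
    """How many times slower than real time the footage plays back."""
    return capture_fps / playback_fps

def screen_seconds(event_seconds: float, capture_fps: float,
                   playback_fps: float = PLAYBACK_FPS) -> float:
    """On-screen duration of an event that took event_seconds in real life."""
    return event_seconds * slowdown(capture_fps, playback_fps)

for fps in (500, 750):  # glass table at 500 fps, piano at 750 fps
    print(f"{fps} fps: {slowdown(fps):g}x slower; a 0.5 s event lasts "
          f"{screen_seconds(0.5, fps):g} s on screen")
```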

All of this carnage was enhanced by visual effects artists at Ukraine’s Digital Axis, who added smoke, fire, lasers and the collapse of the warehouse at the end of the video. In the final shot, the camera pulls back to reveal Stanford standing amid the wreckage, surrounded by all nine Agilus robots in a single composite. Stanford graded “Automatica” himself at 4K for web delivery, using FilmConvert, a color-correction software tool he helped develop.

Civan considers “Automatica: Robots Vs. Music” to be the most technically challenging shoot he’s ever done. “Every project begins with the creative, but sometimes you have to get really technical to achieve something really beautiful,” he muses. “You can’t have one without the other.”